According to a number of analysts, Europe lags behind its main competitors, China and the US, when it comes to business models based on innovative technologies. But it is at least a step ahead in regulating them, thanks to the Artificial Intelligence Act (AI Act).

We are following its development at H512.com, and our Job Board has job listings related to AI.

The first-ever legal framework regulating this technology came into force on 1 August this year, but most of its provisions will apply from 2026. It aims to prohibit AI practices that carry unacceptable risks, set clear requirements for high-risk systems, and impose specific obligations on their providers and deployers.

“The AI Act regulates systems up to the high-risk level and prohibits everything above it. Companies that offer platform development services above that level are in violation, and so are those who use them. High-risk systems are systems where we have big data, create a sequence of algorithms and look for a certain result,” explains Dr. Galya Mancheva, founder of the consulting company Ai Advy and a researcher of the regulation.

The legislation centres on one key term, “AI system”, which it defines as “a machine-based system designed to operate with varying levels of autonomy…”.

AI Act and the risk-based approach

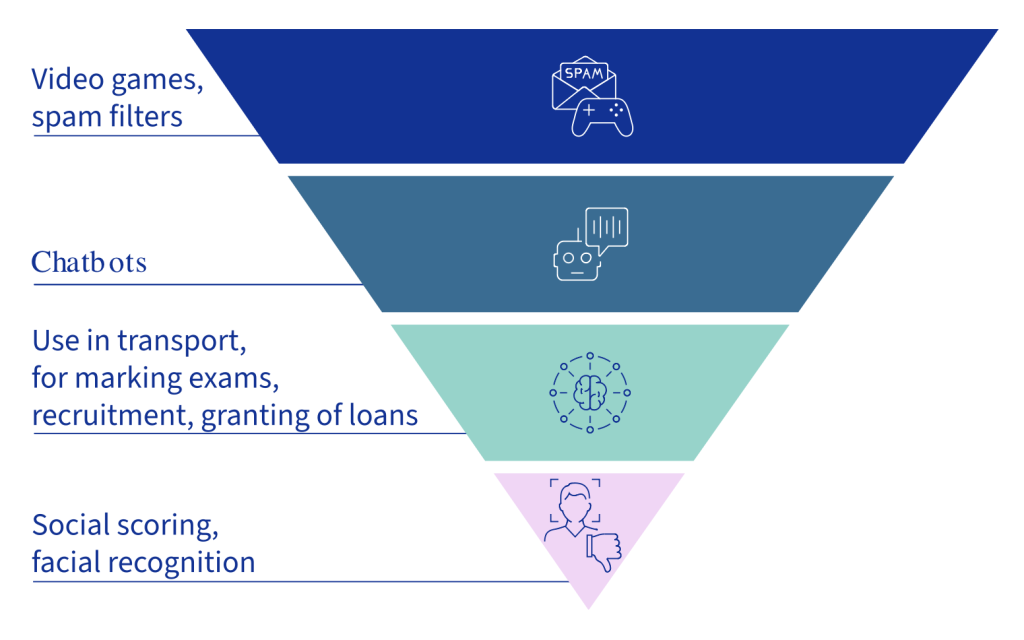

In general, the EU regulatory framework divides AI systems into four levels of risk. At the top of the pyramid are those posing an unacceptable risk because they threaten people’s safety, livelihoods and rights. One example is social scoring systems of the kind used by some governments. Such technologies are banned outright.


Real-time remote biometric identification of individuals in publicly accessible spaces also belongs to this group, with narrow exceptions, for example a targeted search for potential victims of crime.

High-risk AI systems come second, and they are the main focus of the regulation. According to the EU, this group includes technologies used in the management of critical infrastructure, credit scoring and exam assessment, recruitment procedures, judicial decisions, automated processing of visa applications, and more.

Before they are placed on the market, they must undergo an adequate risk assessment to ensure a high level of security and accuracy. They must also be subject to appropriate human oversight measures and ensure traceability.

Generative artificial intelligence (GenAI) and the chatbots we are all familiar with come next. Although GenAI has been at the heart of the technology’s rapid development in recent years, the European Commission places it in the third, limited-risk group.

“In 2023, just as we were all expecting the AI Act to officially come into force, the ChatGPT boom hit. The EC revised the regulation but did not put generative AI in the high-risk group, as the logic and the way of creating it are very different from those of high-risk systems,” points out Dr. Galya Mancheva.

One of the most popular chatbots in the world, ChatGPT, already has 200 million weekly active users. Its creators at OpenAI have previously said they support the European law and are ready to comply with it, “not only because it is a legal obligation, but also because the purpose of the law coincides with their mission to develop and deploy safe AI for the benefit of all humanity”.

The AI Act introduces transparency obligations for this group: for example, providers must ensure that AI-generated content can be identified as such.

Fourth come AI systems with minimal or no risk, such as spam filters and video games with embedded AI.

AI Act and business

When she started researching the upcoming legislation in 2021 and sharing her knowledge, the founder of Ai Advy encountered a lack of understanding from the business community. Back then, the topic seemed distant and futuristic, and companies refused to hear about it. There was also some mistrust in scientific circles.

“At the time, business wasn’t paying attention. These are things we start thinking about when they get too close to us. In marketing, for example, we follow trends, we want to keep up with them, we want to ride the latest waves. But regulations make companies feel uncomfortable and they put off action until literally the last minute,” says Dr. Mancheva of her experience.

H512.com sought the opinion of several companies, some of which did not respond to our queries related to the law.

However, this was not the case with Bulgaria’s Accedia and the US-based DataArt. DataArt specialises in custom software development, with artificial intelligence systems among its core services, and has been developing AI/ML models for several years in key sectors such as finance, media, tourism, retail and healthcare.

“Since 1 August 2024, when the AI Act came into force, we have made several important changes to ensure compliance with the new regulations. For example, we are implementing a risk-based approach to categorising AI systems. High-risk systems undergo thorough review and approval, and unacceptable ones are banned from use in our operations,” says Dmytro Baykov, CTO of AI Labs at DataArt.

To prepare for the new regulations, the company took several key steps. First, it conducted a thorough analysis to identify the necessary changes and drew up a plan for implementing them.

“After implementing the changes, we conducted team training to ensure everyone was aware of the new requirements. We updated our internal policies and procedures to comply with the requirements of the law. At the same time, we made adjustments to ongoing AI projects to meet the new standards,” Baykov adds.

Artificial intelligence is also a key part of the solutions offered by Accedia, one of the largest Bulgarian IT companies. Although its projects are B2B-focused and do not involve processing end users’ personal data, the company is also closely following the development of the regulatory framework in the field.

The company therefore reviews its projects and methodologies to make sure they comply with the law and the relevant standards. “In addition, we have implemented quality control and cybersecurity practices that ensure the reliability and safety of our systems when they are used for our clients’ needs,” Dimitar Dimitrov, managing partner at Accedia, told H512.com.

Although the AI Act does not require specific changes to the training and certification of their teams, the company keeps its consultants informed about new regulations and best practices when creating AI solutions.

This includes training on the ethical use of AI that meets standards for transparency and accountability in creating innovative and reliable business solutions.

“The most important thing is for companies to understand whether they fall under the AI Act, that is, whether they are working with high-risk systems. If the answer is no, they don’t need to do anything. However, if they are working with such platforms, an audit should be done,” recommends Dr. Galya Mancheva.

The audit itself goes through several levels: if the company meets the minimum requirements, a further audit is carried out against the full set of criteria. AI processes that do not meet the requirements should be taken out of use. Otherwise, companies face fines of up to 7% of their global revenue or €35 million, whichever is higher.
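The “whichever is higher” rule for the maximum fine is simple arithmetic, so a short sketch may help make it concrete. This is a minimal illustration in Python, assuming global revenue is given in whole euros; the function name `max_fine_eur` is hypothetical, and the code only computes the ceiling described above, not how a regulator would actually set a fine in a given case.

```python
# Hypothetical helper illustrating the "whichever is higher" rule for the
# maximum fine cited in the article; not an official calculation method.
FIXED_CAP_EUR = 35_000_000   # EUR 35 million
TURNOVER_PERCENT = 7         # 7% of global revenue


def max_fine_eur(global_revenue_eur: int) -> int:
    """Return the fine ceiling in whole euros: the higher of 7% of revenue or EUR 35M."""
    if global_revenue_eur < 0:
        raise ValueError("revenue cannot be negative")
    # Integer arithmetic avoids floating-point rounding on large amounts.
    revenue_based = global_revenue_eur * TURNOVER_PERCENT // 100
    return max(revenue_based, FIXED_CAP_EUR)


if __name__ == "__main__":
    for revenue in (100_000_000, 500_000_000, 1_000_000_000):
        print(f"revenue EUR {revenue:,} -> max fine EUR {max_fine_eur(revenue):,}")
```

For a company with €1 billion in global revenue, 7% (€70 million) exceeds €35 million, so the ceiling is €70 million. At €100 million in revenue, 7% is only €7 million, so the €35 million figure applies instead; the two amounts meet at €500 million.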


Incentive or disincentive?

All this raises the question of whether the AI Act hinders or helps the development of artificial intelligence in Europe.

“There is a risk that overly stringent regulations could slow down some innovation by imposing significant compliance requirements on companies. However, I believe the AI Act will encourage responsible innovation rather than slow it down,” commented Dmytro Baykov of DataArt.

According to him, the EU is building a robust regulatory framework that encourages companies to prioritise responsible AI practices and sets clear standards for transparency, accountability and ethics.

DataArt is among the companies with AI-related services in its portfolio, and so is Accedia. There, too, they believe the AI Act has the potential to have a positive impact on innovation by encouraging the development of ethical and responsible AI solutions.

“Having clear regulatory frameworks in place creates trust in new technologies, providing protection for end users and encouraging companies to act transparently and responsibly. This would help Europe establish its place as a leader in innovation, while protecting the public interest and driving the sustainable development of AI,” Dimitrov notes.

Dr. Galya Mancheva, founder of Ai Advy, sees it the same way. In her words, the key goal of this legislation is to take Europe to the next technological level.

“The US and China will continue to lead, but with the AI Act Europe is trying not to fall further behind, but to start moving. So far it has stood still and others have moved forward. This legislation aims to protect the rights of people who may be affected by the use of high-risk systems, on the one hand. On the other hand, to make sure that the companies themselves know that what they are doing is in the right direction,” she concluded.

Publish date: 20 November, 2024
